

What is this?

This is a Convolutional Neural Network (CNN) model trained on the FER+ dataset to recognize 8 emotions in faces: anger, contempt, fear, disgust, happiness, neutral, sadness, and surprise. The model was proposed in Barsoum et al., trained with the Microsoft Cognitive Toolkit, and exported to the Open Neural Network eXchange (ONNX) format. It is currently deployed using MXNet Model Server (MMS) hosted on AWS Fargate.

The code for the web demo of the model can be found here, and the training code here.

How does it work?

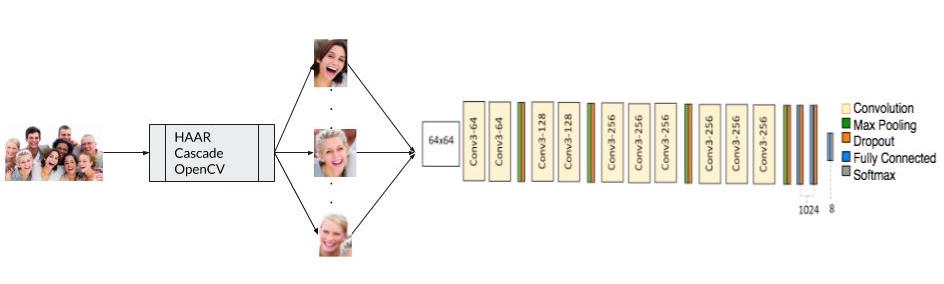

Figure 1: How the Emotion FER+ demo works.

Emotion recognition is an image classification problem. Haar-cascade detection from the OpenCV library is first used to extract the faces in the image. The extracted faces are converted into 64x64 grayscale images and passed to a custom VGGNet model. Applying a softmax to the output of the final layer of the VGGNet produces a probability distribution over the 8 emotion labels: neutral, happiness, surprise, sadness, anger, disgust, fear, and contempt.
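The final step of this pipeline, turning the network's raw output into a probability per emotion, can be sketched in a few lines of plain Python. This is an illustrative sketch only: the function names and the example scores are hypothetical, and the label order is simply the order listed above, assumed (not confirmed) to match the model's output indices.

```python
import math

# Label order as listed in the text; assumed to match the output index order.
EMOTION_LABELS = ["neutral", "happiness", "surprise", "sadness",
                  "anger", "disgust", "fear", "contempt"]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    # Subtract the maximum score first for numerical stability.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores):
    """Return the most likely label and the full label-to-probability map."""
    if len(scores) != len(EMOTION_LABELS):
        raise ValueError("expected one score per emotion label")
    probs = softmax(scores)
    by_label = dict(zip(EMOTION_LABELS, probs))
    best = max(by_label, key=by_label.get)
    return best, by_label


# Hypothetical final-layer scores for a single 64x64 face crop.
example_scores = [0.5, 3.2, 1.1, -0.3, -1.0, -2.0, -1.5, -2.2]
label, probabilities = classify(example_scores)
print(label, round(probabilities[label], 3))
```

Because the softmax preserves the ordering of the scores, the predicted emotion is the label with the largest raw score. The probabilities are mainly useful for showing how confident the model is across all 8 labels.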

The Emotion FER+ model is trained to minimize the cross-entropy loss given in Equation 1:

$$\mathcal{L} = -\sum_{i=1}^{N} \sum_{k=1}^{8} p_k^i \log q_k^i \tag{1}$$

where the label distribution is the target. In Equation 1, for each image $i$ of the $N$ images and each label $k$ of the 8 labels, $p_k^i$ is a binary value indicating whether the image belongs to that label (1) or not (0), and $q_k^i$ is the model's predicted probability that the image belongs to that label.
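To make the loss concrete, here is a minimal sketch that computes the cross-entropy described above, the negative sum over all images and labels of p times log q. It is not the original training code: the function name, the `eps` guard, and the two-image example are assumptions chosen for illustration.

```python
import math


def cross_entropy_loss(targets, predictions, eps=1e-12):
    """Negative sum of p * log(q) over every image i and every label k."""
    loss = 0.0
    for p_row, q_row in zip(targets, predictions):
        for p, q in zip(p_row, q_row):
            # eps (an assumption of this sketch) guards against log(0).
            loss -= p * math.log(max(q, eps))
    return loss


NUM_LABELS = 8

# Hypothetical targets: image 0 belongs to label 1, image 1 to label 0.
targets = [
    [0, 1, 0, 0, 0, 0, 0, 0],
    [1, 0, 0, 0, 0, 0, 0, 0],
]

# Hypothetical model outputs: image 0 puts probability 0.5 on the correct
# label and spreads the rest evenly; image 1 is uniform over all 8 labels.
predictions = [
    [0.5 / 7, 0.5] + [0.5 / 7] * 6,
    [1.0 / NUM_LABELS] * NUM_LABELS,
]

loss = cross_entropy_loss(targets, predictions)
print(loss)
```

With binary targets, only the probability assigned to the correct label contributes to each image's term. In this example the loss is -log(0.5) - log(0.125), which equals log(16).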

Click here for a detailed tutorial on using the FER+ model with MXNet Gluon
